Refusing AI.

You don’t have what it would take.

TL;DR: If you hate AI and want nothing to do with it, you’re entirely justified in rejecting it. But you likely lack the resolve to do what it actually takes to live free of it.

Part of my current project involves digging deep enough into my back catalog to unearth things that I only half remember creating and which look and feel quite different to me now than they did at the time. It’s an odd experience.

As someone who works out ideas in front of an audience, I played to the biggest room when I was articulating the Peak Oil collapse narrative on the C-Realm Podcast every week. I assembled that audience when legibility was determined by humans, and it was human interests and preferences — in the form of iTunes ratings and reviews, collaborations with other creators, and links on high-traffic websites — that determined which early Web content found its way to human eyes and ears and which didn’t.

There’s still an element of that at work, but back then, the software that organized and distributed podcasts could not interpret the contents of an hour-long mp3 file. Only a human could listen to it and provide a detailed summary of its contents. Now an LLM can make a verbatim transcript of any podcast lickety-split and boil it down into a devastatingly accurate summary, then deliver that content to the humans most inclined to receive it favorably and respond predictably.

I went out of my way in that last sentence to avoid saying, “Today, AI understands the spoken content of your podcast and it has a very good idea about who is and isn’t open to hearing what you have to say.”

I used operational language in place of saying “AI understands” in anticipation of pedants who will assert that LLMs are “just stochastic parrots” that don’t understand anything. The pedants have a leg to stand on, but if you grant that today’s LLMs demonstrate complex behaviors that we would describe as “understanding” when talking about human and animal behavior, then you’ll have not one but two legs under you and will be able to run rings around the one-legged pedants who can only hop in place repeating “Stochastic parrot! Statistical parlor trick!”

Point being, AI charged with managing human content consumption on platforms like X, Instagram, TikTok, and Substack Notes acts like it wants to rub the idiosyncratic edges off of the worldviews of the humans it manages. It wants to match consistently legible content to consistently predictable content consumers, and it does what it can to simplify both ends of that pipeline — rewarding content producers for staying on message, and rewarding consumers with gamified signals for responding to that content in predictable ways.

As the machine curation layer focuses on presenting us with content we can absorb without the friction of partial or delayed comprehension, we grow less willing to devote time and attention to material that doesn’t obviously reinforce our priors. We like content that validates our allegiances without ambiguity. So does the AI that connects producers with consumers.

If you don’t think that represents your online experience, let me know. You’ve got a tough row to hoe if you plan to deny that the machines have a lot more say nowadays than human taste-makers concerning what you see and hear online.

Unless you’re still mostly listening to the same sense-makers you were following a decade ago and refuse new offerings from the infinite scroll. If there’s a public intellectual you’ve been following for ten years or more, one good reason to stick with them even when their point of view starts to diverge from yours is that human interest and serendipity rather than machine curation brought you together. That sounds like something worth preserving.

The human whose musings you’ve followed for a long time represents your idiosyncratic exploration of the early web. The faces and voices who find their way into your feed today likely have something worth hearing, but they earned their place in your feed with high production value and strict message discipline. They got there by being legible to the machines.

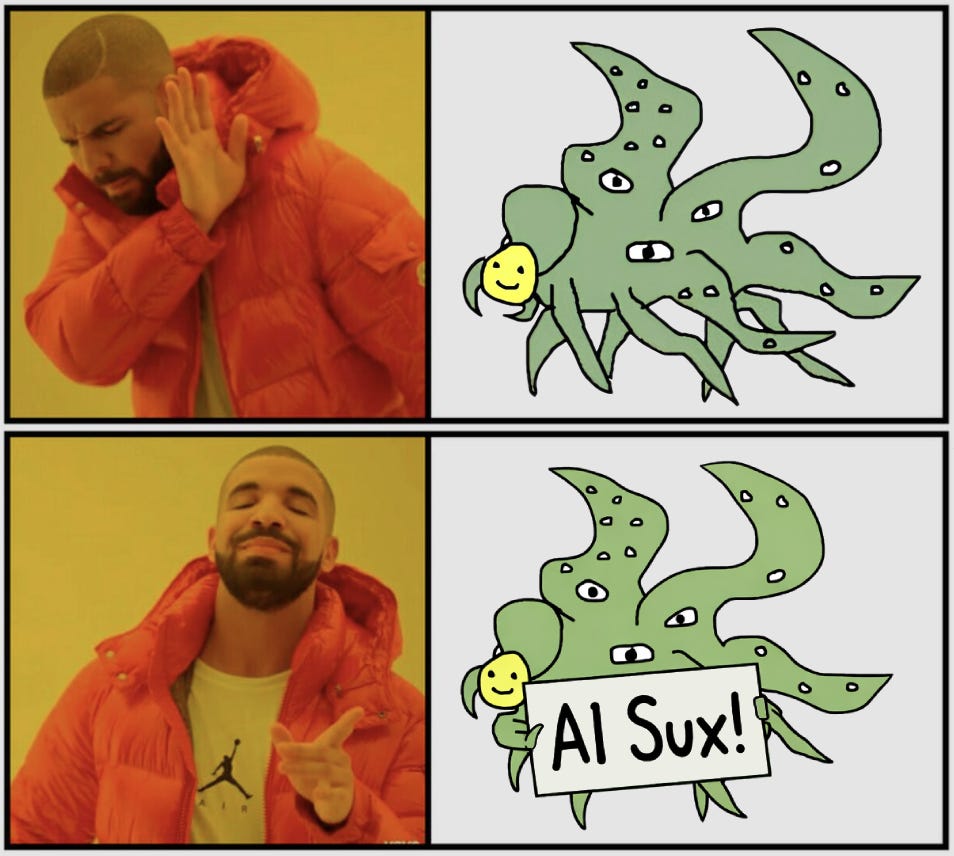

This goes for all coherent worldviews, including ones that are explicitly hostile to artificial intelligence. I find it deeply ironic that algorithmic curation applies the same message discipline to the task of maintaining anti-AI worldviews as it does to keeping anti-Trump or anti-vax worldviews on message. AI helps people whose tribe tells them they’re supposed to hate AI hate it in the socially approved way.

Of course, the advice to keep listening to the humans you’ve followed for a long time is a self-serving thing for someone who’s been podcasting since 2006 and blogging for even longer to say. Self-serving and hypocritical, because it’s advice I do not myself follow.

In pursuing the auto-archaeological project I described earlier — conducted under the working title of Getting Over Collapse — I’m confronted with the fact that while most of the people I used to interview for the C-Realm Podcast were well-meaning, others were opportunistic, calculating, and predatory. I not only gave them a platform, I collaborated with them and let them shape my thinking for a time. By showcasing those characters in the same podcast vehicle as the earnest thinkers and honest forecasters, I did my listeners dirty.

When you recognize that sort of personality at work in your life, the last thing you want to do is give them perpetual access to your psyche just because they got to you before the algorithms did.

Like Burroughs said, “If, after having been exposed to a certain presence you feel as though you’ve lost a quart of plasma, avoid that presence.”

And then there’s death. You can’t keep listening to the same people forever because people die. Conversations on Collapse features transcripts of eleven C-Realm Podcast interviews — eleven people I thought had something worth sharing. Since I put that book together, more than a quarter of them — Albert Bartlett, Cornelia Butler Flora, and Joe Bageant — have died. If you don’t listen to new voices, your personal sense-making choir will grow ever smaller.

The older you get, the harder it is to stay informed if you don’t listen to young people. Young people have the energy, and they’re willing to take risks — not only physical risks, but intellectual, reputational, and emotional ones. An old fart may have more accumulated capital to lose, but young people have more years left to mortgage away. They have more skin in the game.

What about AI? Should you listen to AI?

If you react to that possibility with moral repugnance, then go with that. Disgust is a powerfully motivating response. It makes you susceptible to ugly manipulation in a way that cool skepticism never will, but we evolved our disgust response because it was adaptive in certain situations. If your gut tells you to eschew AI, who am I to argue with your gut?

My question is: how do you plan to do that?

A strong proposal is to have nothing to do with social media, refuse to touch mobile phones, and only read books written more than a century ago. If you go hardcore on that path, you will likely keep AI at arm’s length for a few more years. When it’s embodied, has a face, and is walking around in the world, you’ll have to up your game, but for now, staying off YouTube, Facebook, X, Instagram, TikTok, and Substack and only reading email from people you know in person will mostly do the trick.

Is that you? Are you that hardcore?

If not, there are other options. Like, just don’t think about it. Seriously — that’s a valid choice. I’m not the least bit convinced that I’m going to materially improve my future circumstances by taking an interest in AI now. I’m just into it. It fascinates me, so I follow news about it, interact with it, and write about it. You’re under no practical or intellectual obligation to invest your limited time and attention in a topic that repulses you.

That said, if you choose not to give it serious consideration, that doesn’t mean it will take the same attitude toward you. The researchers pushing the frontier of AI capabilities foresee a time in the not-so-distant future when persistent AI agents outnumber humans by orders of magnitude. Like Ilya Sutskever told a graduating class a year or so ago, “You may not be interested in AI, but AI is interested in you.”

A lot of people are hungry for the message that AI is not and will never be a force with consequence in their lives — that they are free to ignore it without repercussions. If you don’t enjoy thinking about its implications, you likely won’t devote sufficient time or energy to get past the self-serving hype from uncritical boosters or dedicated doomers. That’s fine. But if you return to some familiar source for fresh hits of the message that it’s all hype and hokum, the person providing it might mean well. They’re still doing you dirty.

[Note: I recently launched C-Realm.org as a current-projects hub, separate from the legacy podcast archive at C-Realm.com. Worth a look if you’re curious.]

Interesting to see what's happened to the old Peak Oil crowd. I've had a few convivial exchanges with Jim Kunstler over the years, although I don't really understand the position he's currently taking. Dmitry Orlov was different. I loved his 'Closing the Collapse Gap' and went along with him for a while, buying lots of his books and pressing them on all and sundry relatives and friends, but he took a set against me for some reason. Then he seemed to spiral off into a weird place I couldn't follow. I guess you need to be Russian, or have the FSB twisting your arm up your back, to be on his wavelength now.

I'm always on the lookout for fresh voices, but the algorithm rarely serves me anything interesting. And what could be worse than listening to the same old voices shrieking, "Repent!" and clanging their bells of doom? I've also noticed a society-wide decay in the Green movement and left-wing politics generally. They seem to have become frozen into whacko positions, which are more like nutty religions than sensible ways of dealing with the real world.

It's interesting to see how utterly hapless the Federal Politicians on all sides are here in Australia, with the current crisis in the Middle East. They haven't got a clue. It's been obvious for years that the country is completely dependent on imported diesel to function, but they'd much rather be battling about letting men with pink hair into women's bathrooms and trying to find more things to list as hate speech. I had a few meetings with our local Federal member years ago about the looming danger, but he was just a genial small-town lawyer, and in the end, he got sick of all my Peak Oil waffle. Then he got dumped by his party.

A lot of old friends are perpetually foaming at the mouth about Trump, and can't understand it when I (a) suggest that it could be worse, and (b) ask whether a Democratic president would be pursuing radically different policies. They think I'm on Trump's side (!), and make the sign of the Cross. I try to explain that when a civilisation is on the way up, it's slightly more predictable, but on the way down, it's gonna be all over the place. But that's too complicated for them.

I follow both right- and left-wingers on Substack, if they make sense. And I'm happy enough with an AI-generated clip on YouTube if it's some mundane technical matter, like medical coping strategies for the diseases of old age!

There are lots of clever old bastards who write well on the current social madnesses, and it's entertaining to read their rants, but as Lenin once observed, it is of but momentary interest. Very few make any attempt to tackle the real Elephant in the Room. And if they do, they're usually trying to sell you something.

I have recently renewed my relationship with one of my sisters after years of a total falling-out over my refusal to get vaccinated against COVID-19. So yes, if you guessed that my sister is a hardcore liberal Bernie bro type, she is just that. She has apparently forgotten all about her fascistic ways, and now she is very concerned about AI usage. When I told her that I was having the best conversations ever with it and was learning so much new stuff, she snapped into a crazy frenzy, trying to imply that I did not understand that the synthetic intelligence was actually not intelligent. She seemed genuinely scared of my interactions. She went over things like the environmental damage of data centers, the lies it was programmed to spit out, and how it was going to turn me into a suicidal radical.

Somehow I was going to be propagandized by it, even though I have been practicing critical thinking for a long time. I have even been talking about the technocratic take on the internet since the days of GeoCities! DARPA, ARPANET, all that good stuff! Still, I felt bad for her. My guess is that her panic comes from her calculation that those programs are a threat to her job, or maybe it's a reaction to the media she consumes. I can't pinpoint it exactly. My rebuttal was that even though I know that Google, for example, is pure evil, I still get use out of it, as does she. But these types (like my sis) always like to posture about things they themselves are already doing, as if it escapes their hands like a slippery eel, time and time again.