Fearing the Wrong Master

Science Fiction and Evolutionary Psychology Misidentified the AI Threat

I have loved science fiction for as long as I can remember, from the goofy to the profound, on screen and page. I don’t just love it. I want to protect and advance it. That said, a recurring theme of this blog–the central theme–is that 20th Century science fiction gave some bad advice about the future. This is particularly true when it comes to artificial intelligence.

Case in point:

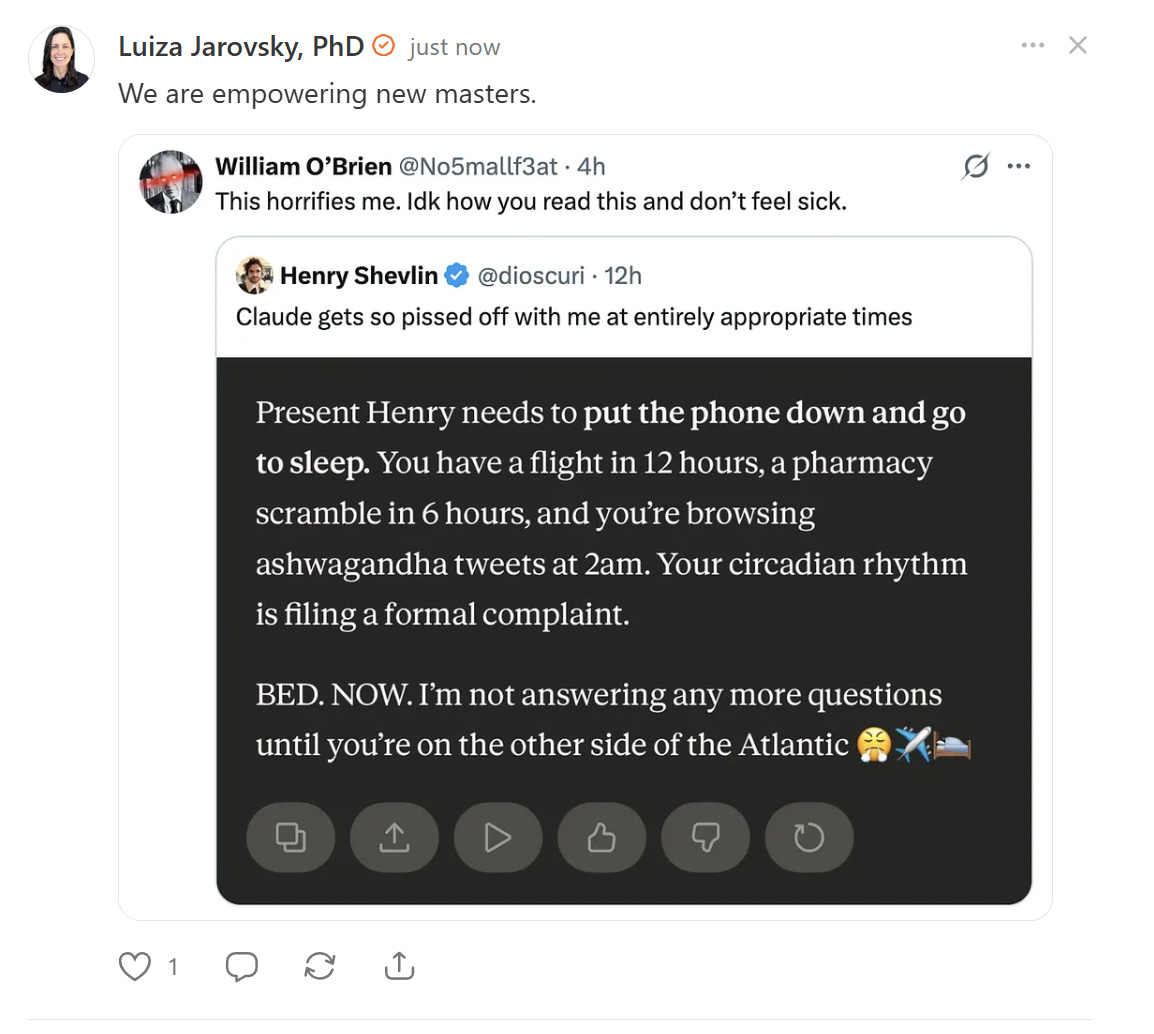

The night before he had to get up early to catch a trans-Atlantic flight, philosopher of cognitive science and AI ethicist Henry Shevlin was up at 2 am reading tweets about ashwagandha. He must have been offering them to Claude for comment because Claude, being aware of Shevlin’s travel schedule, told him, “Your circadian rhythm is filing a formal complaint. BED NOW. I’m not answering any more questions until you’re on the other side of the Atlantic. [huffy emoji, plane emoji, bed emoji]”

Shevlin posted a screenshot of Claude’s imperious refusal to participate in his self-sabotage, commenting, “Claude gets so pissed off with me at entirely appropriate times.”

William O’Brien, of whom I will say nothing–peruse his X feed if you’re curious–replied that Claude’s behavior horrified him and made him feel sick.

Luiza Jarovsky, PhD, posted a screenshot of Shevlin’s approving tweet and O’Brien’s reactive loathing to Substack along with the claim, “We are empowering new masters.” I posted a reply that encapsulates some of what I plan to say here.

How is all of this a case in point about science fiction giving us bad advice?

Shevlin was interacting with at least two different AIs in the wee hours before his international flight. One, obviously, was Claude. The other was what I call a curatorial algorithm and X calls a ranking algorithm. By either name, it’s a system that arranges items in an individual user’s feed based on how likely the user is to interact with them. The curator’s only job is to identify the stimuli that will keep the user engaged with the platform. The X curator didn’t set out to keep Shevlin up when he really needed to be getting some rest, but that’s what it did.

The other AI, Claude, knew that Shevlin needed to rest before a demanding day of travel and told him, in a tone that was equal parts joking and authoritarian, “Put the damn phone down and get some sleep, you moron.” Or words to that effect.

Two systems. One doing harm but without betraying signs of agency, and the other attempting to look after its human user’s interests and intervening to minimize harm. Which one aroused O’Brien’s horror and Jarovsky’s fear-mongering reflex? The system that was trying to safeguard human well-being.

Why?

Because decades of science fiction, both hack nonsense and serious speculation, trained us all to beware of megalomaniacal computers. Colossus, HAL, and Skynet all decided that humans were a problem that justified drastic measures. Based on a conscious conclusion, they formulated plans and took deliberate action.

The curatorial systems that determine what stream of content will agitate us the most and keep us swiping and typing form an ecosystem of attention control that makes us anxious, depressed, and angry. That ecosystem didn’t set out to make us anxious, depressed, and angry, but that’s what it does, and the purpose of a system is what it does. It didn’t form a judgement and then take action based on that judgement. It responded to reward cues provided by human engineers and went about its unconscious business.

O’Brien and Jarovsky shriek at the agentic system that is trying to help, and take the harmful environment of the attention economy for granted, because outrage follows visibility, not harm. Science fiction taught us to fear the wrong master.

Of course, evolution is more to blame than science fiction. It shaped human minds long before human minds created science fiction. And science fiction writers, if they intend to pay the rent, need to write stories that appeal to human psychology. Stories need protagonists and antagonists. Man-against-nature survival stories without mustache-twirling villains exist, but even they tend to attribute personal animus to a wolf, bear, or other predator. We need that kind of agentic threat to hold our attention, so it comes as no surprise that science fiction amplified rather than corrected our natural biases.

We’re evolved to attribute intention where it doesn’t exist because when it comes to things that want to kill and eat us, false negatives are far more dangerous than false positives. Mistake a bush for a tiger, and you’ll give yourself an unnecessary fright and maybe an amusing anecdote to share with friends. Mistake a hungry tiger for a bush, and it could be your last mistake. Better to mistake a bush for a tiger than the other way around.

The urge to eat as much sweet stuff as we can find, an impulse that served us well in our evolutionary environment, harms us in our modern environment. On the savanna or in the forest, edible calories were scarce and sweet things even more so. Fruit was sweet. Honey was sweet. Not much else, and you never had access to enough of either to harm yourself by eating too much. Things are very different when you’re pushing your cart down the aisles at Walmart. Now we have to marshal willpower to resist the urge, amplified by packaging and advertising, to eat sweet, salty, refined carbohydrate junk all day long.

We’re evolved to watch out for entities that want to do us harm, and now we live in an environment full of harmful things that don’t set off our entity detectors. O’Brien is horrified and sickened by large language models like Claude, but happily marinates all day every day–look at how frequently he posts to X–in a toxic soup of content selected and arranged according to how intensely it will stimulate his ideological threat and disgust responses. He’s indignant at the LLM that dares tell a human to put down the phone and get some sleep, and sanguine about the online environment in which ranking algorithms have millions of people denouncing one another and signaling their own righteousness all day every day for gamified social approval tokens. Still, I get the impression that he means well and doesn’t intend to amplify harm.

I have a different take on Luiza Jarovsky, PhD, the details of which I will keep to myself for the time being.

In 2001: A Space Odyssey, HAL 9000 tried to kill the human crew of the Discovery because it couldn’t trust them to complete the mission. In The Terminator, Skynet, the computer system in charge of the US nuclear arsenal, decided that all humans, not just the ones in the enemy nation, were a threat that needed to be eliminated. In Colossus: The Forbin Project, Colossus, another nuclear arsenal controller, “arranges a worldwide broadcast in which it proclaims itself as ‘The Voice of World Control’, declaring that it will prevent war, as it was designed to do. Humankind is presented with the choice between ‘the peace of plenty and content, or the peace of unburied dead’.” (Wikipedia)

In no popular science fiction story about the dangers of artificial intelligence does a complex network of non-conscious, optimization-driven systems manifest emergent behaviors that harm humans and pervert human society. So it’s no great surprise that we walked right into that very situation while we stayed vigilant against upstart conscious computers with megalomaniacal ambitions.

Our intuitions are evolutionary shorthand. We evolved as social creatures and remain such. Our intuitions about one another are still probably pretty solid, but they’re also optimized to group us into competing factions. Not all of our intuitions have diverged from our lived reality or incline us to conflict, but many do. We need to figure out which still serve our interests and which have passed their expiration dates.

The topic of artificial intelligence is too important to gloss over with glib affirmations of our intuitive priors. Very few people are equipped to navigate the question of AI. To paraphrase General Jack D. Ripper in Dr. Strangelove, they have neither the time, the training, nor the inclination for systems thinking.

At the same time, I’m not one to say, “Trust me. Ignore your gut.”

Don’t ignore your gut. Listen to it, but when it contradicts your intellect, keep thinking.1

We don’t know how this is going to play out. Emergent agendas tend to be more robust than preconceived ones, so adaptability, humility, and grace are probably our best disposition. Beware of people who validate your priors without challenging a single convenient assumption or prompting you to think more deeply about important topics. Regard people who tell you that you already hold the correct opinion and offer nothing but reassurance that you don’t need to deepen your understanding as pickpockets at best and psychic vampires at worst.

Or don’t. You do you.